
| Indexing Policy & Robots.txt |

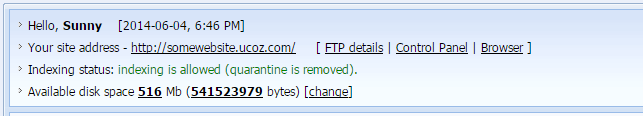

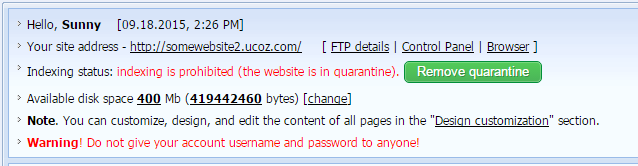

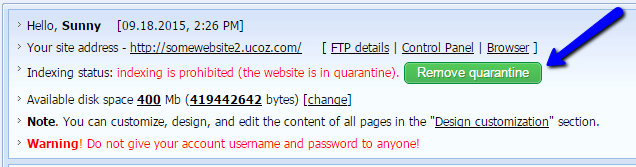

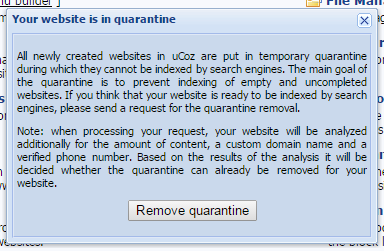

Website's Indexing Status

All uCoz websites have an indexing status, displayed at the top of the Control Panel's main page (/panel/?a=cp). It shows whether search engines are allowed to index the website, that is, whether the website is in quarantine. The status takes one of two values: "indexing is allowed (quarantine is removed)" or "indexing is prohibited (the website is in quarantine)". The status "indexing is prohibited (the website is in quarantine)" is assigned by default to all newly created websites.

Quarantine Removal Policy

A website can become available for indexing either automatically (if a premium plan is purchased) or upon the website owner's request. If the website does not have a premium plan and the user wants the quarantine removed, a request should be submitted from the website's Control Panel. A pop-up window will show information on the quarantine policy.

After the request has been submitted, the website is checked automatically against a number of criteria: the website's age, presence of a custom domain name, content, verified phone number, etc. Based on these criteria the system decides whether the quarantine should be removed. We cannot provide a more detailed description of the algorithm.

Note! If the quarantine removal is denied, the next request can be submitted no sooner than 7 days later.

Robots.txt

A website's robots.txt file is located at http://your_website_address/robots.txt.

A website with the default robots.txt is indexed in the best possible way: we set up the file so that only pages with content are indexed, and not every existing page (e.g. the login or registration page). Therefore uCoz websites are indexed better and get higher priority in comparison with other sites where all unnecessary pages are indexed. That's why we strongly recommend not replacing the default robots.txt with your own.
If you still want to replace the file with your own, create a text file using Notepad or any other text editor and name it "robots.txt". Then upload it to the root folder of your website via File Manager or FTP.

Note: while website indexing is prohibited, the robots.txt file cannot be modified.

The default robots.txt looks as follows:

    User-agent: *
    Allow: /*?page
    Allow: /*?ref=
    Allow: /stat/dspixel
    Disallow: /*?
    Disallow: /stat/
    Disallow: /index/1
    Disallow: /index/3
    Disallow: /register
    Disallow: /index/5
    Disallow: /index/7
    Disallow: /index/8
    Disallow: /index/9
    Disallow: /index/sub/
    Disallow: /panel/
    Disallow: /admin/
    Disallow: /informer/
    Disallow: /secure/
    Disallow: /poll/
    Disallow: /search/
    Disallow: /abnl/
    Disallow: /*_escaped_fragment_=
    Disallow: /*-*-*-*-987$
    Disallow: /shop/order/
    Disallow: /shop/printorder/
    Disallow: /shop/checkout/
    Disallow: /shop/user/
    Disallow: /*0-*-0-17$
    Disallow: /*-0-0-

    Sitemap: http://forum.ucoz.com/sitemap.xml
    Sitemap: http://forum.ucoz.com/sitemap-forum.xml

The robots.txt during the quarantine looks as follows:

    User-agent: *
    Disallow: /

Robots.txt FAQ

Informers are not indexed because they display information that ALREADY exists. As a rule, this information is already indexed on the corresponding pages.

Question: I have accidentally messed up robots.txt. What should I do?
Answer: Delete it. The default robots.txt file will be added back automatically (the system checks whether a website has it, and if not, adds back the default file).

Question: Is there any use in submitting a website to search engines if the quarantine hasn't been removed yet?
Answer: No, your website won't be indexed while in quarantine.

Question: Will the robots.txt file be replaced automatically after the quarantine has been removed? Or should I update it manually?
Answer: It will be updated automatically.

Question: Is it possible to delete the default robots.txt?
Answer: You can't delete it, it's a system file, but you can add your own file.
However, we don't recommend doing this, as stated above. During the quarantine it is impossible to upload a custom robots.txt.

Question: What should I do to forbid indexing of the following pages?
http://site.ucoz.com/index/0-4
http://site.ucoz.com/index/0-5
Answer: Add the following lines to the robots.txt file:

    Disallow: /index/0-4
    Disallow: /index/0-5

Question: I have forbidden indexing of some links by means of robots.txt but they are still displayed. Why is that?
Answer: With robots.txt you can forbid indexing of pages, not links.

Question: I want to make some changes to my robots.txt file. How can I do this?
Answer: Download it to your PC, edit it, and then upload it back via File Manager or FTP.

I'm not active on the forum anymore. Please contact other forum staff.
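To see which paths the default rules quoted in this post actually block, here is a minimal Python sketch. It is not how any particular search engine is implemented. It assumes the wildcard semantics commonly documented for robots.txt (`*` matches any run of characters, a trailing `$` anchors the end of the path, the longest matching pattern wins, and `Allow` wins a tie), and it assumes the file has a single `User-agent: *` group. The names `parse_rules`, `pattern_to_regex` and `is_allowed` are made up for this illustration.

```python
import re

# The default uCoz robots.txt as quoted in the first post.
DEFAULT_ROBOTS = """\
User-agent: *
Allow: /*?page
Allow: /*?ref=
Allow: /stat/dspixel
Disallow: /*?
Disallow: /stat/
Disallow: /index/1
Disallow: /index/3
Disallow: /register
Disallow: /index/5
Disallow: /index/7
Disallow: /index/8
Disallow: /index/9
Disallow: /index/sub/
Disallow: /panel/
Disallow: /admin/
Disallow: /informer/
Disallow: /secure/
Disallow: /poll/
Disallow: /search/
Disallow: /abnl/
Disallow: /*_escaped_fragment_=
Disallow: /*-*-*-*-987$
Disallow: /shop/order/
Disallow: /shop/printorder/
Disallow: /shop/checkout/
Disallow: /shop/user/
Disallow: /*0-*-0-17$
Disallow: /*-0-0-
Sitemap: http://forum.ucoz.com/sitemap.xml
Sitemap: http://forum.ucoz.com/sitemap-forum.xml
"""


def parse_rules(text):
    """Collect (directive, pattern) pairs from Allow/Disallow lines.

    Assumption: the file has one 'User-agent: *' group, so grouping
    by user agent is ignored here.
    """
    rules = []
    for line in text.splitlines():
        line = line.split("#", 1)[0].strip()  # drop comments
        if ":" not in line:
            continue
        field, value = line.split(":", 1)
        field, value = field.strip().lower(), value.strip()
        if field in ("allow", "disallow") and value:
            rules.append((field, value))
    return rules


def pattern_to_regex(pattern):
    """Translate a robots.txt pattern ('*' wildcard, trailing '$') to a regex."""
    anchored = pattern.endswith("$")
    if anchored:
        pattern = pattern[:-1]
    body = ".*".join(re.escape(part) for part in pattern.split("*"))
    return re.compile(body + (r"\Z" if anchored else ""))


def is_allowed(path, rules):
    """Longest matching pattern wins; Allow wins a tie; no match means allowed."""
    best = None  # (pattern length, is_allow)
    for field, pattern in rules:
        if pattern_to_regex(pattern).match(path):
            candidate = (len(pattern), field == "allow")
            if best is None or candidate > best:
                best = candidate
    return True if best is None else best[1]


if __name__ == "__main__":
    rules = parse_rules(DEFAULT_ROBOTS)
    for path in ("/", "/news/?page2", "/news/?sort=1", "/panel/", "/stat/dspixel"):
        print(path, "allowed" if is_allowed(path, rules) else "blocked")
```

Under these assumptions `/news/?page2` and `/stat/dspixel` come out as allowed (the longer `Allow` pattern beats the shorter `Disallow`), while `/news/?sort=1` and `/panel/` come out as blocked. Real crawlers may interpret edge cases differently, so treat the result as a rough check only.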

|

seLymmm, do not index this one: zoomedia.at.ua. Index the other one, http://film.zoomizle.com/, because after a domain is attached, indexing of zoomedia.at.ua is impossible. You can allow indexing of the website on both domain names: Control panel -> Main » Common settings -> Allow site indexing (by search engines) by both domains.

|

Tia, you are still in quarantine if your site is not yet a month old and has no content. How old is your site?

Post edited by khen - Tuesday, 2010-12-07, 8:48 AM

|

Tia, have a look at the first post. This is the way robots.txt looks during quarantine. This link might be helpful: http://faq.ucoz.com/faq/0-0-29
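If you would rather check this from a script than by opening the file in a browser, here is a rough Python sketch. It only compares the file against the two-line quarantine robots.txt quoted in the first post (`User-agent: *` followed by `Disallow: /`). The function names `is_quarantine_robots` and `fetch_robots` are hypothetical, and the URL pattern is the one given in the first post.

```python
import urllib.request


def is_quarantine_robots(text):
    """True if the file contains only 'User-agent: *' and 'Disallow: /'.

    Assumption: the quarantine file is exactly the two-line version
    quoted in the first post (comments and blank lines are ignored).
    """
    directives = []
    for line in text.splitlines():
        line = line.split("#", 1)[0].strip()  # drop comments
        if ":" not in line:
            continue
        field, value = line.split(":", 1)
        directives.append((field.strip().lower(), value.strip()))
    return directives == [("user-agent", "*"), ("disallow", "/")]


def fetch_robots(site_address):
    """Download http://<site_address>/robots.txt and return it as text."""
    url = f"http://{site_address}/robots.txt"
    with urllib.request.urlopen(url, timeout=10) as response:
        return response.read().decode("utf-8", errors="replace")
```

For example, `is_quarantine_robots(fetch_robots("your_website_address"))` would report whether the site is still serving the quarantine file. The indexing status in the Control Panel remains the authoritative place to look.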

|

Quote What is "UNetBot"? uCoz robot? Yes.

How effective is this bot, and is there a way I can know if it has visited my site? |

OK, hi. I uploaded my own robots.txt, but how do I get it to switch with the old one?

Check out Rservices & RandomAndroid at: http://www.randomness-fun.com/ (hosted by uCoz). Also come check out our official Android IRC chat room at: http://www.randomness-fun.com/index/rservices_irc_chat/0-34

|

Upload your robots.txt to the root of the File Manager, i.e. not inside any folder. It will automatically replace the default robots.txt. However, I can't see a reason why you would want a custom robots file, because the one in place is really good.

|

Mark-ucoz-co-uk,

Quote (Sunny) Robots.txt file is a system file. If you still want to substitute it with your own, create a text file using Notepad or any other text editor and name it "robots.txt". Then upload it to the root folder of your site by means of File Manager or FTP. Address of the robots.txt file is http://your_website_address/robots.txt

Quote (Mark-ucoz-co-uk) i uploaded my own robots.txt but how do i get it to switch with the old one?

You don't. It is done automatically. |
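For anyone who does decide to upload a custom file, the FAQ answer in the first post about forbidding extra pages (such as /index/0-4 and /index/0-5) can be sketched in Python as below. This is only an illustration: `add_disallow_rules` is a made-up helper, it assumes the file has a single `User-agent: *` group, and the sample input (including its sitemap URL) is a shortened placeholder, not a real site's file.

```python
def add_disallow_rules(robots_text, paths):
    """Return robots_text with a 'Disallow:' line added for each new path.

    New lines are placed before the first 'Sitemap:' line so they stay
    next to the existing rules (assumption: one 'User-agent: *' group).
    Paths that already have a Disallow line are skipped.
    """
    lines = robots_text.splitlines()
    existing = {line.strip().lower() for line in lines}
    new_lines = [
        f"Disallow: {path}"
        for path in paths
        if f"disallow: {path}".lower() not in existing
    ]
    # Index of the first Sitemap line, or the end of the file if none.
    insert_at = next(
        (i for i, line in enumerate(lines)
         if line.strip().lower().startswith("sitemap:")),
        len(lines),
    )
    return "\n".join(lines[:insert_at] + new_lines + lines[insert_at:]) + "\n"


if __name__ == "__main__":
    # Shortened placeholder file; a real one would start from the default rules.
    sample = (
        "User-agent: *\n"
        "Disallow: /panel/\n"
        "Sitemap: http://site.ucoz.com/sitemap.xml\n"
    )
    print(add_disallow_rules(sample, ["/index/0-4", "/index/0-5"]))
```

The returned text can be saved as "robots.txt" and uploaded to the root folder via File Manager or FTP, as described in the first post. Starting from a downloaded copy of the default file keeps the existing rules intact.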


Need help? Contact our support team via the contact form or email us at support@ucoz.com.